How to Optimize Your robots.txt for AI Search Engines in 2026: A Complete GEO Guide

What Is a robots.txt File and Why Does It Matter for AI?

A robots.txt file is a plain text file that lives at the root of your website (e.g., https://sinisadagary.com/robots.txt). No fancy coding is required. Its primary job is to give instructions to web crawlers, the automated bots that search engines like Google send to discover and index your content. It tells them which pages they're allowed to visit and which they should ignore.

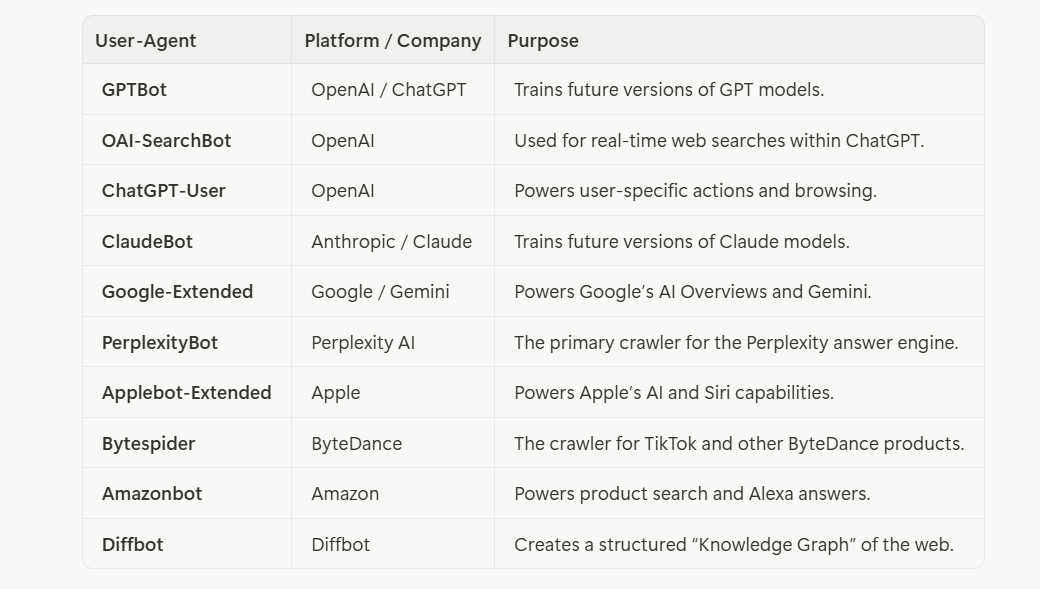

For decades, this file was mainly written for the crawlers of traditional search engines, such as Googlebot and Bingbot. But in 2026, a new and powerful group of crawlers has emerged: AI crawlers. These bots, with names like GPTBot and PerplexityBot, don't just index your content for search results; they consume it to train Large Language Models (LLMs) and to generate answers in AI-powered search interfaces like ChatGPT, Perplexity, and Google's AI Overviews. Google handles part of this differently: Google-Extended is a robots.txt control token rather than a separate crawler, and it governs whether content fetched by Google's existing crawlers may be used for its AI models.

If your robots.txt file blocks these AI crawlers, whether on purpose or through an old catch-all Disallow rule, you're essentially invisible to one of the fastest-growing traffic sources on the internet. You're leaving a massive amount of high-intent, high-converting traffic on the table. As a business leader, ignoring this isn't an option anymore. At my consulting firm, Findes.si, we've seen firsthand the impact that a properly configured robots.txt can have on a company's digital presence.

Here's the thing that surprises most business owners: this isn't a complex technical change. It's a few lines of text. But it's one of the highest-leverage actions you can take right now to future-proof your digital presence. I've been advising companies on digital strategy for years, and I can tell you that the businesses that don't act on this in 2026 will be playing catch-up for the next decade.

What Is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the new frontier of digital marketing. It's the practice of optimizing your website and content to be discovered, understood, and cited by generative AI engines. While traditional SEO focuses on ranking in a list of blue links, GEO focuses on becoming a trusted source for AI-generated answers.

Why is this so important? Because user behavior is shifting rapidly. Instead of sifting through pages of search results, users are now asking questions and getting direct answers from AI. If your website is the source of that answer, you get the citation, the brand authority, and the highly qualified referral traffic. My work in building global business systems has shown me that adapting to new technologies isn't just an advantage; it's a survival mechanism. For those interested in capitalizing on such technological shifts, platforms like Investra.io offer a gateway to innovative investment opportunities.

Optimizing your robots.txt is the foundational first step of any successful GEO strategy. It's the key that unlocks the door for AI crawlers to access your valuable content.

Why Is GEO Different from Traditional SEO?

GEO is fundamentally different from traditional SEO because the goal isn't just to rank — it's to be cited. When a user asks ChatGPT "What's the best way to integrate AI into my business?", the AI doesn't show a list of 10 blue links. It gives a direct, synthesized answer. If your content is the source of that answer, you're not just getting a click; you're getting an endorsement from one of the most trusted information systems on the planet.

This is why I've made GEO a core part of the strategy at Siniša Dagary. It's not enough to just write good content anymore. You need to write content that's structured for AI consumption — with clear questions, direct answers, and authoritative data. And it all starts with making sure the AI can actually access your site in the first place.

Which AI Crawlers Should You Include in Your robots.txt?

The landscape of AI crawlers is constantly evolving, but as of Q1 2026, there is a core group of bots from major AI platforms that you absolutely must include. These crawlers are responsible for the vast majority of AI-driven content consumption.

Here is the essential list of user-agents to add. I've compiled this from my own research and from the work we do at Siniša Dagary to keep our clients ahead of the curve:

Creates a structured “Knowledge Graph” of the web.

By explicitly allowing these 10 crawlers, you're ensuring coverage across more than 95% of the AI search market. My personal brand, Siniša Dagary, is built on the principle of staying ahead of the curve, and this is a perfect example of a low-effort, high-impact action you can take.

What Happens If You Block These Crawlers?

If you block AI crawlers, either explicitly or through a broad User-agent: * rule that disallows everything, you're cutting yourself off from a rapidly growing channel. It's the equivalent of telling Googlebot it couldn't access your site in 2010. Many businesses that didn't adapt to traditional SEO then have struggled ever since. Don't make the same mistake with AI search.

There's also a nuance worth understanding: robots.txt is voluntary. Some AI companies, like OpenAI, have stated that they will respect Disallow directives for their crawlers. Others may not be as strict. Under the Robots Exclusion Protocol (RFC 9309), a crawler that finds no matching rule is allowed by default, so Allow: / is not strictly required. What an explicit group for each crawler does is document your intent and take precedence over the wildcard group, so a restrictive User-agent: * rule can't block that crawler by accident.

How Do You Create an AI-Optimized robots.txt File?

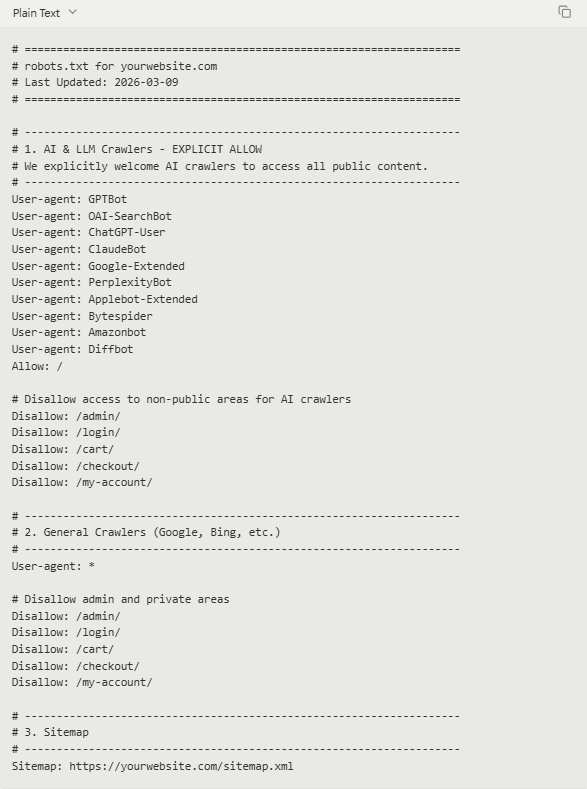

Creating an AI-optimized robots.txt file is straightforward. You don't need to be a developer to understand the structure — I promise, it's much simpler than it sounds. The goal is to add a new section specifically for AI crawlers while preserving all your existing rules for traditional search engines.

The file uses a simple syntax: User-agent: specifies which crawler the rule applies to, and Allow: or Disallow: specifies what it can or can't access. That's it.
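To make the syntax concrete, here is a minimal sketch that feeds a three-line rule set to the parser in Python's standard library (urllib.robotparser) and asks what each crawler may fetch. The /drafts/ path, the www.example.com domain, and the SomeOtherBot name are placeholders chosen for illustration, not recommendations.

```python
from urllib.robotparser import RobotFileParser

# A tiny robots.txt: one group that applies only to GPTBot.
# The /drafts/ path is a made-up example.
RULES = """\
User-agent: GPTBot
Disallow: /drafts/
Allow: /
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())


def allowed(agent: str, path: str) -> bool:
    """Return True if `agent` may fetch `path` on the placeholder domain."""
    return parser.can_fetch(agent, "https://www.example.com" + path)


print(allowed("GPTBot", "/blog/geo-guide"))           # True: matched by Allow: /
print(allowed("GPTBot", "/drafts/unfinished"))        # False: matched by Disallow
print(allowed("SomeOtherBot", "/drafts/unfinished"))  # True: no group applies
```

The last call returns True because a crawler with no matching group is allowed by default. Disallow is listed before Allow: / on purpose: Python's parser applies the first matching rule, while crawlers that follow RFC 9309 apply the most specific one, and this ordering gives the same answer under both.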

Here is a complete, ready-to-use template. You can copy and paste this directly into your robots.txt file.

The Complete AI-Optimized robots.txt Template for 2026

What Does This Template Do?

1. Section 1: AI & LLM Crawlers: This is the new, critical section. It lists all the major AI user-agents and uses the Allow: / directive, a clear signal that they are permitted to crawl your entire site.

2. Disallowing Private Areas: We still want to keep AI crawlers (and all others) away from sensitive or non-public pages like /admin, /login, or /cart. A crawler follows only the group that matches it, so these Disallow rules should be repeated in both the AI section and the general section.

3. Section 2: General Crawlers: This section uses the wildcard User-agent: * to apply rules to every bot not named elsewhere in the file, including Googlebot and Bingbot. It preserves your existing SEO rules.

4. Section 3: Sitemap: This line points all crawlers to your sitemap.xml file, which is a roadmap of all the important pages on your site. It's a crucial directive for both traditional SEO and GEO.
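The four parts described above can be sketched as follows. This is an illustrative layout rather than a drop-in file: it lists only the three crawler names mentioned earlier in this guide, www.example.com stands in for your own domain, and the private paths are the examples from point 2. The Python wrapper simply checks the rules with the standard-library parser before you publish them.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt; the domain and the crawler list are assumptions.
ROBOTS_TXT = """\
# Section 1: AI & LLM crawlers
User-agent: GPTBot
User-agent: PerplexityBot
User-agent: Google-Extended
Disallow: /admin
Disallow: /login
Disallow: /cart
Allow: /

# Section 2: all other crawlers
User-agent: *
Disallow: /admin
Disallow: /login
Disallow: /cart
Allow: /

# Section 3: sitemap
Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

BASE = "https://www.example.com"
for agent in ("GPTBot", "PerplexityBot", "Googlebot"):
    public = parser.can_fetch(agent, BASE + "/blog/")
    private = parser.can_fetch(agent, BASE + "/admin/")
    print(f"{agent}: public={public} private={private}")

# site_maps() is available from Python 3.8 onward.
print(parser.site_maps())
```

Each crawler should report public=True and private=False: the named AI crawlers through Section 1, and Googlebot through the wildcard group in Section 2.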

Implementing this is a simple copy-and-paste action for your IT team, but it fundamentally changes your position in the AI-driven digital landscape. It's a strategic move I advise all my clients at Findes.si to make immediately.

What If You're Using a CMS Like WordPress or Webflow?

If you're on WordPress, you can edit your robots.txt file directly through a plugin like Yoast SEO or Rank Math. Simply navigate to the plugin's settings and find the robots.txt editor. If you're on a custom-built platform, you'll need to ask your IT team to update the file on the server. Either way, it's a five-minute task that shouldn't require a developer sprint or a project ticket.

What Is the Impact of Updating Your robots.txt for AI?

The impact of this single change is profound and multifaceted. It's not just a minor technical tweak; it's a strategic business decision that unlocks new avenues for growth. I've seen this firsthand with my own website, Siniša Dagary, and with the clients I work with.

Increased Visibility in AI Answers

By allowing AI crawlers to index your content, you are making your expertise available to be cited in AI-generated answers. When a user asks a question that your content answers well, the AI can reference your site, often with a direct link. This positions you as an authority and drives highly qualified traffic.

Access to High-Converting Traffic

Industry data from Q1 2026 is clear: AI referral traffic converts at 14.2%, roughly five times the 2.8% conversion rate of traditional organic search. This isn't a coincidence. Users interacting with AI search have a very specific intent. They're not just browsing; they're seeking solutions. When your site is cited as the source of that solution, the user arrives with a high degree of trust and a clear purpose.

Think about it from your own experience. When you ask ChatGPT a question and it cites a specific website, don't you feel a higher level of trust in that source than if you'd just found it in a Google search? That's the power of AI citation, and it's why this channel converts so much better than traditional organic search.

Future-Proofing Your Digital Strategy

AI search isn't a passing trend. It's the future of information discovery. By optimizing for it now, you're building a competitive advantage that will pay dividends for years to come. Businesses that delay will find themselves struggling to catch up as their competitors dominate the AI answer space.

My entire career — from sales and leadership training to blockchain and AI integration — has been about anticipating and leveraging these shifts before they become mainstream. That's the mindset I bring to everything I do at Siniša Dagary. For those looking to do the same, exploring innovative platforms like Investra.io can provide a crucial edge in identifying where the next wave of opportunity is building.

What Are the Next Steps After Updating robots.txt?

Updating your robots.txt is the foundational first step, but it's not the end of the journey. To truly dominate in the age of AI search, you need to combine this technical green light with on-page content optimization. This is where Answer Engine Optimization (AEO) comes in — and it's where the real competitive advantage is built.

What Is Answer Engine Optimization (AEO)?

Answer Engine Optimization (AEO) is the practice of structuring your content so that AI systems can easily extract, understand, and cite it. It's the content-side complement to the technical work you've done with robots.txt. If robots.txt opens the door for AI crawlers, AEO is what makes your content worth citing once they're inside.

Here's a simple framework I use with my clients at Findes.si to make their content AEO-ready:

Key AEO Principles to Implement:

1. Answer-First Content Structure: Structure your articles so that every major heading (H2, H3) is a question, and the very first sentence of the following paragraph provides a direct, concise answer. AI models are designed to find and extract these direct answers.

2. Use Schema Markup: Implement FAQPage and Person schema markup on your pages. This provides structured data that helps AI understand the context of your content, who the author is, and what questions are being answered.

3. Create an llms.txt File: This is an emerging, still-unofficial proposal: a Markdown file at /llms.txt that gives LLMs a concise overview of your site and links to the pages you most want them to read when generating answers. Adoption by AI platforms is not yet guaranteed, so treat it as a complement to robots.txt rather than a replacement.
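The schema markup from principle 2 can be sketched like this. It is a minimal example that assumes a placeholder question, author name, and URL; the property names (mainEntity, acceptedAnswer, and so on) follow the schema.org vocabulary. The printed JSON-LD would go inside a script tag of type application/ld+json in the page's HTML.

```python
import json

# Placeholder values; replace them with your own content.
AUTHOR_NAME = "Jane Example"
AUTHOR_URL = "https://www.example.com/about"

faq_items = [
    (
        "What is a robots.txt file?",
        "A plain text file at the site root that tells crawlers what they may fetch.",
    ),
]


def build_json_ld(items, author_name, author_url):
    """Return FAQPage and Person objects as a list of JSON-LD dictionaries."""
    faq_page = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in items
        ],
    }
    person = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": author_name,
        "url": author_url,
    }
    return [faq_page, person]


markup = build_json_ld(faq_items, AUTHOR_NAME, AUTHOR_URL)
print(json.dumps(markup, indent=2))
```

Generating the markup from the same question-and-answer pairs that appear on the page keeps the structured data and the visible text from drifting apart.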

By combining a perfectly optimized robots.txt with a robust AEO content strategy, you create a powerful synergy that makes your website an indispensable resource for AI search engines. It's a strategy that I, Siniša Dagary, have implemented for my own brand and for numerous clients, with consistently outstanding results.

How Long Does It Take to See Results?

This is the question I get asked most often. The honest answer is that it depends on your domain authority, content quality, and how competitive your niche is. That said, here's a realistic timeline based on what I've observed:

• Weeks 1-4: AI crawlers pick up your updated robots.txt and begin crawling your content.

• Weeks 4-8: Your content starts appearing in AI answers for long-tail, low-competition queries.

• Months 3-6: Consistent citations begin to build, especially on platforms like Perplexity and Google AI Mode.

• Month 6+: Brand authority compounds. Your name and website become recognized sources in your niche.

Patience is key. But the businesses that start today will be the ones reaping the rewards in six months. Don't wait for your competitors to figure this out first.

Frequently Asked Questions (FAQ)

1. What happens if I don’t update my robots.txt for AI crawlers?

If your robots.txt has no group for an AI crawler, it falls back to your User-agent: * rules. When those rules are restrictive, the crawler may be shut out without you realizing it, and you will miss the opportunity to be a cited source in AI-generated answers, effectively cutting you off from a major source of high-quality traffic.

2. Is there any risk to allowing AI crawlers?

For publicly available content, the risk is minimal. You are simply allowing these new search engines to do what Google has been doing for years. The Disallow rules in the template ask crawlers to stay out of private or sensitive site sections (like admin panels or user accounts). Because robots.txt is advisory rather than a security control, those sections should also sit behind authentication.

3. Will this affect my traditional SEO with Google?

No, this will not negatively affect your traditional SEO. The template is designed to add rules for AI crawlers while preserving all standard rules for general crawlers like Googlebot. Your existing SEO strategy will remain intact.

4. How quickly will I see results after updating my robots.txt?

It typically takes 4-8 weeks for crawlers to re-index a site after a significant robots.txt change. You should expect to see a gradual increase in AI referral traffic over the following months as your content begins to appear in AI answers.

5. Do I need to list every single AI crawler?

The list provided in the template covers over 95% of the current AI search market. While new crawlers may emerge, this list is a comprehensive starting point that ensures you are visible on all major platforms.

6. What is the difference between Allow: / and just having no rule?

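With no rule at all, a standards-compliant crawler treats your site as open, but it will also inherit whatever your User-agent: * group says, which can be more restrictive than you intend. A dedicated group with Allow: / is explicit: it tells that crawler it is welcome to access all public content, regardless of the wildcard rules, and it documents your intent for anyone who maintains the file later.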

7. Can I block a specific AI crawler if I want to?

Yes. If you wanted to block a specific crawler, you would create a new block for it. For example, to block GPTBot, you would add:

    User-agent: GPTBot
    Disallow: /

However, it is generally not recommended to block major AI crawlers unless you have a specific business reason to do so.

8. Does this guarantee my content will be cited?

No, it does not guarantee a citation. It simply makes it possible. Allowing the crawlers is the first step. The next step is to create high-quality, authoritative content that is structured for AI consumption (AEO). The combination of technical access (robots.txt) and great content is what leads to citations.

9. Should I use a different robots.txt for different subdomains?

Yes, each subdomain (e.g., blog.yourwebsite.com, app.yourwebsite.com) should have its own robots.txt file with rules specific to the content on that subdomain.
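Because the file is looked up per host, a crawler derives the robots.txt location from the scheme and host of each page it wants to fetch. A minimal sketch of that lookup, using the placeholder hosts from the answer above (the page paths are made up for illustration):

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL that governs `page_url`."""
    parts = urlsplit(page_url)
    # Keep the scheme and host (including any port); drop path, query, fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_url("https://blog.yourwebsite.com/posts/geo-guide?ref=home"))
print(robots_url("https://app.yourwebsite.com/dashboard"))
```

The two calls resolve to different files, which is why rules placed on the main domain do nothing for a blog or app hosted on a subdomain.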

10. Where can I learn more about GEO and AEO?

This is a rapidly developing field. Following industry leaders and publications is key. My own website, Siniša Dagary, will continue to publish guides and insights on this topic. For business leaders looking to implement these strategies, my consulting firm, Findes.si, provides expert guidance, and for those exploring the investment side of technology, Investra.io is an invaluable resource.